Summary


Contractual Trust#

- Contractual trust

Humans trusting a machine to fulfil a contract in a particular context

Trust, Distrust, and Lack of Trust#

Trust

A trusts B if:

A believes that B will act in A’s best interests; and

A accepts vulnerability to B’s actions; so that:

A can anticipate the impact of B’s actions, enabling collaboration.

Distrust

A distrusts B if:

A believes B will act against A’s best interests.

Lack of Trust

A lacks trust in B if either of the following is true:

A does not believe that B will act in A’s best interests; or

A does not accept vulnerability to B’s actions.

Lack of trust requires only the absence of trust, whereas distrust requires A to actively believe that B will act against A’s best interests.
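The three definitions above can be read as predicates over A's attitude towards B. The following is a minimal sketch under that reading: the `Stance` class and its field names are illustrative assumptions, not part of the definitions, and the "so that" clause of the trust definition is treated as a consequence of trust rather than as a third condition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stance:
    """A's attitude towards B (hypothetical field names, for illustration)."""
    believes_b_acts_for_a: bool      # A believes B will act in A's best interests
    believes_b_acts_against_a: bool  # A believes B will act against A's best interests
    accepts_vulnerability: bool      # A accepts vulnerability to B's actions


def trusts(s: Stance) -> bool:
    # Both conditions of the trust definition must hold.
    return s.believes_b_acts_for_a and s.accepts_vulnerability


def distrusts(s: Stance) -> bool:
    # Distrust needs a positive belief that B will act against A.
    return s.believes_b_acts_against_a


def lacks_trust(s: Stance) -> bool:
    # Either missing condition is enough; this is the negation of trusts().
    return (not s.believes_b_acts_for_a) or (not s.accepts_vulnerability)


# A has formed no belief about B either way and accepts no vulnerability.
undecided = Stance(False, False, False)
# A believes B will act against A's best interests.
suspicious = Stance(False, True, False)
```

In this sketch `undecided` lacks trust without distrusting, while `suspicious` both lacks trust and distrusts, which mirrors the distinction drawn above.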

Contracts for AI#

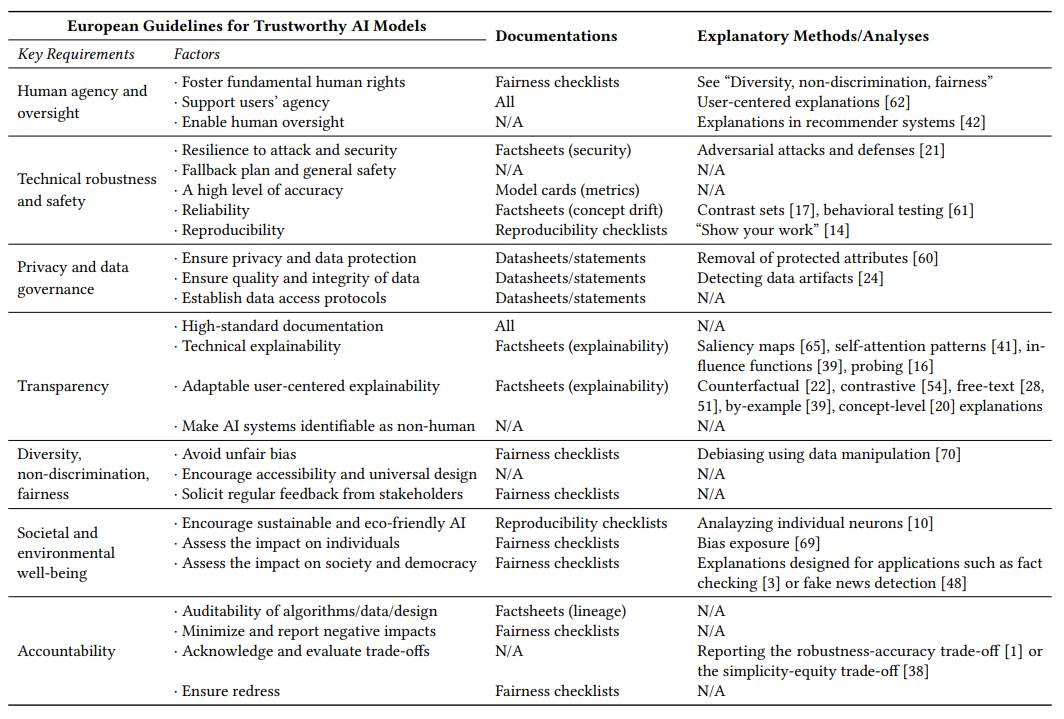

Fig. 1 The set of contracts that an AI should uphold to be considered trustworthy according to European guidelines. Source: Formalizing Trust in Artificial Intelligence: Prerequisites, Causes and Goals of Human Trust in AI

Warranted and Unwarranted Trust#

| | Trusted | Distrusted |
|---|---|---|
| Trustworthy | Warranted Trust | Unwarranted Distrust |
| Not Trustworthy | Unwarranted Trust | Warranted Distrust |
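The table above can be expressed as a small lookup. This is a sketch only: the function name `classify` and its two boolean parameters are assumptions made for illustration, and `trusted=False` is taken to mean "distrusted", matching the table's two columns.

```python
def classify(trustworthy: bool, trusted: bool) -> str:
    """Return the table cell for a given trustworthy/trusted combination."""
    # The attitude is warranted when it matches the machine's actual
    # trustworthiness: trust in a trustworthy machine, or distrust in
    # one that is not trustworthy.
    warranted = trustworthy == trusted
    prefix = "Warranted" if warranted else "Unwarranted"
    attitude = "Trust" if trusted else "Distrust"
    return f"{prefix} {attitude}"


# Enumerate all four cells of the table.
for trustworthy in (True, False):
    for trusted in (True, False):
        print(trustworthy, trusted, classify(trustworthy, trusted))
```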

Use, Misuse, Disuse, and Abuse of Automation#

Misuse

Using automation where it shouldn’t be used.

Caused by:

Unwarranted Trust

Disuse

Not using automation when it should be used.

Caused by:

Unwarranted Distrust

Abuse

Deploying automation where it shouldn’t be deployed.

Caused by:

Unwarranted Distrust on the part of the AI designer.

Can arise if:

the AI designer has (unwarranted) distrust in human operators;

the AI designer believes their AI solution will be better (unwarranted trust in their AI);

designers or decision makers are biased in favour of automation; or

designers or decision makers are arrogant.
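The link between a user's unwarranted attitude and the resulting failure mode can be sketched as a function. The name `user_failure_mode` and its parameters are hypothetical, and `trusted=False` is assumed to mean "distrusted". Abuse is left out because, as described above, it stems from the designer's or decision maker's attitude rather than the user's.

```python
from typing import Optional


def user_failure_mode(trustworthy: bool, trusted: bool) -> Optional[str]:
    """Map a user's attitude to the failure mode it is said to cause."""
    if trusted and not trustworthy:
        # Unwarranted trust: automation is used where it shouldn't be.
        return "Misuse"
    if trustworthy and not trusted:
        # Unwarranted distrust: automation is not used when it should be.
        return "Disuse"
    # Warranted trust or warranted distrust: no failure mode in this sketch.
    return None
```

For example, a user who relies on a system that is not trustworthy falls under "Misuse", while a user who avoids a trustworthy system falls under "Disuse".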

Power#

- Power

The ability to control our circumstances

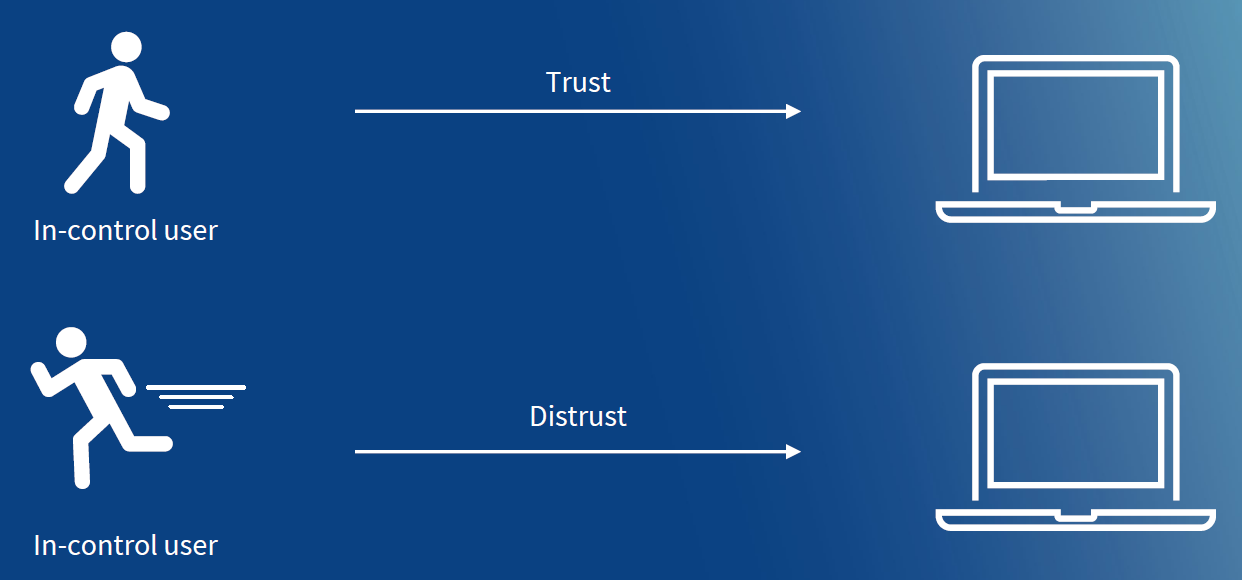

User Control#

Fig. 2 An ethical relationship between a user and machine, where the user has the power to disengage if distrust is established.#

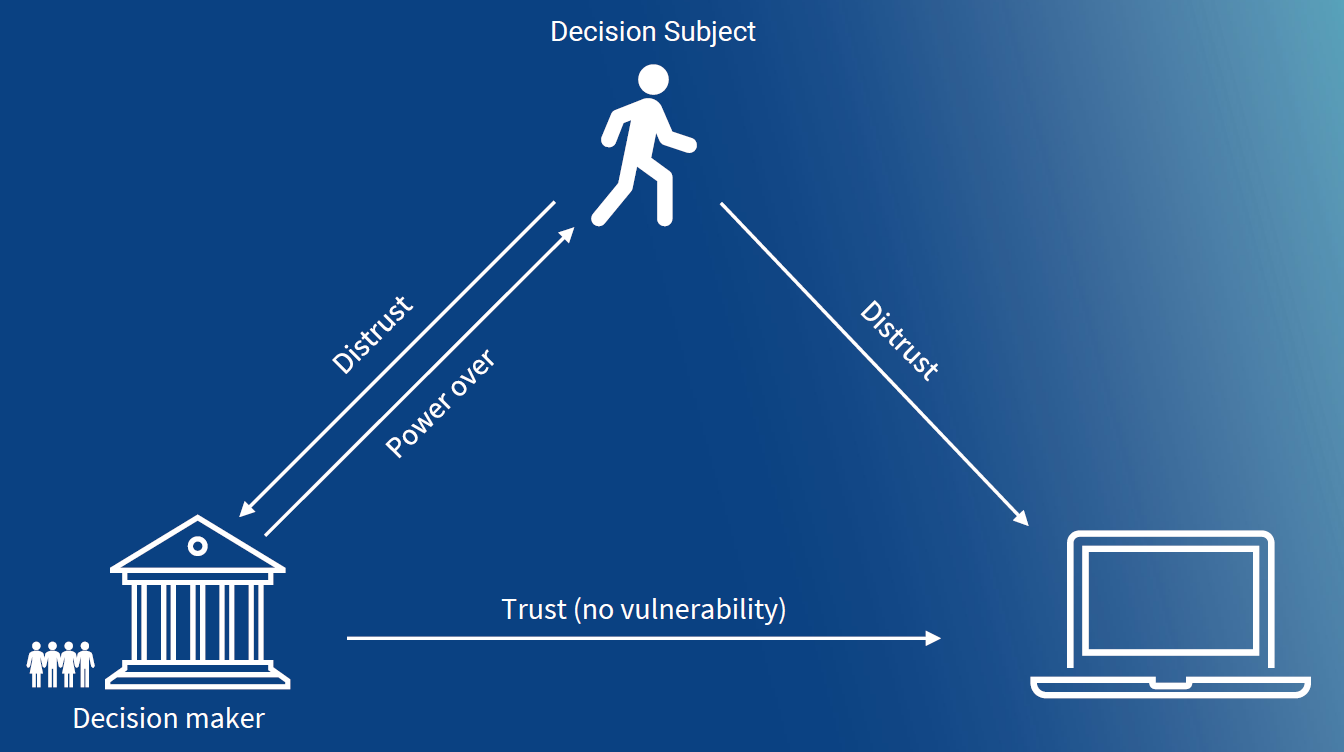

Fig. 3 An unethical power relationship between a user (decision subject), decision maker, and machine: the user distrusts the machine, the decision maker has the power to enforce its use, and the decision maker is not vulnerable to negative consequences that arise from the machine’s decisions.